08th May 2026, Nipuna Weerasinghe

From Ambition to Accountability: A Practical Roadmap for Operationalising Responsible AI in Your Organisation

For C-suite executives, AI strategists, and governance leaders who are done with the theory and ready for the work.

There’s no shortage of AI ethics frameworks, white papers, or keynote speeches on responsible AI. What is in short supply are organisations that have actually done the hard work of putting it into practice.

From what I’ve seen, the gap between declaring AI principles and truly operationalising them isn’t about understanding. It’s about execution. And closing that gap takes a structured, practical approach, not another framework document sitting untouched somewhere in the organisation.

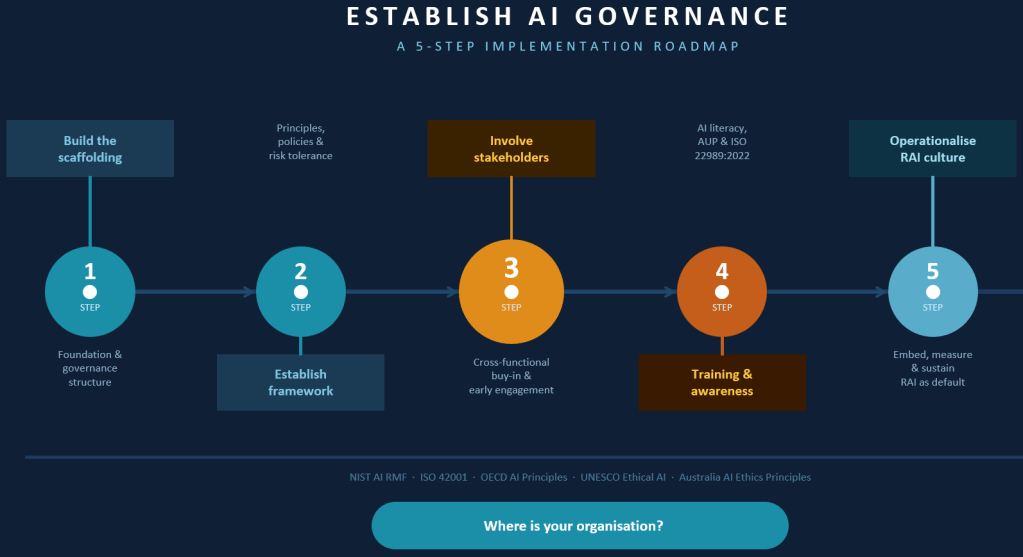

Here is the five-step roadmap you should use. It is grounded in established frameworks, including the NIST AI RMF, ISO 42001, the OECD AI Principles, the Fair Information Practice Principles (FIPPs), and UNESCO’s Recommendation on the Ethics of Artificial Intelligence, and it is written for leaders who need to make real decisions in real organisations.

Step 1: Build the scaffolding

Before you can govern AI, you need to know what your AI strategy is, because it will define the shape of your AI governance model. I will cover how to define an AI strategy for your organisation in a separate blog post; in this one, I will focus on the roadmap for establishing a proper AI governance framework.

Like building a house, you need an accurate engineering design and plan with the right foundations based on what you want. You can’t lay a foundation and then decide you need a double-storey house. Similarly, without this first step, every subsequent step can collapse.

You cannot govern what you have not mapped, and you cannot be accountable for what you have not owned. So where should the AI governance journey start? Always start from what you already have.

Most organisations already have mature cybersecurity, privacy, and GRC processes in place. AI governance does not need to be invented in isolation; it should be built on top of these existing structures and adapted for the unique characteristics of AI systems.

You may argue that AI governance and security are nothing more than your existing data and security controls. While that is correct to some extent, it is not the full picture. I will discuss how data and security controls impact AI in more detail later.

The first practical actions at this stage are:

- Foster a genuine governance community. This is not a committee of IT leaders. It needs to be a broader group of stakeholders: privacy, legal, cybersecurity, HR, business units, and, where relevant, external experts. AI governance that is owned by technology alone will always fail to address the organisational, ethical, and human dimensions of the problem.

- Establish your RACI. Who is accountable for AI decisions? Who is responsible for monitoring? Who needs to be consulted? Who needs to be informed? Until these questions are answered explicitly, accountability will fall through the cracks.

- Create the right incentives. Establish metrics to measure performance and demonstrate where you are on your roadmap and how close you are to achieving your objectives. Executives respond to evidence and measurement. Build the measurement framework early.

- Choose your governance model deliberately. There is no universally right answer here: centralised models offer consistency and control, decentralised models offer agility and business-unit relevance, and hybrid models attempt to balance both. What matters is that the choice is conscious and fits your organisation’s structure and culture.

- Evolve continuously. Build strong feedback loops from day one. AI governance that is designed once and left static will be obsolete within eighteen months.
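The RACI point above lends itself to a simple automated check. The sketch below is only an illustration, not a prescribed tool: it assumes a RACI is recorded as a mapping from party to role letter for each activity, and it flags activities that lack exactly one accountable party or have no responsible party. The names `RaciEntry` and `find_raci_gaps`, and the example activities and parties, are hypothetical.

```python
from dataclasses import dataclass, field

# R = Responsible, A = Accountable, C = Consulted, I = Informed
VALID_ROLES = {"R", "A", "C", "I"}


@dataclass
class RaciEntry:
    """One governance activity and who holds which RACI role (hypothetical structure)."""
    activity: str
    assignments: dict[str, str] = field(default_factory=dict)  # party -> role letter


def find_raci_gaps(entries: list[RaciEntry]) -> list[str]:
    """Return a list of problems: unknown role letters, not exactly one 'A', or no 'R'."""
    problems = []
    for entry in entries:
        roles = list(entry.assignments.values())
        unknown = sorted(set(roles) - VALID_ROLES)
        if unknown:
            problems.append(f"{entry.activity}: unknown role letters {unknown}")
        accountable = roles.count("A")
        if accountable != 1:
            problems.append(
                f"{entry.activity}: expected exactly 1 accountable party, found {accountable}"
            )
        if "R" not in roles:
            problems.append(f"{entry.activity}: no responsible party assigned")
    return problems


# Illustrative data only; the activities and parties are assumptions.
entries = [
    RaciEntry(
        "Approve high-risk AI use case",
        {"Chief Risk Officer": "A", "AI governance lead": "R", "Legal": "C", "Business unit head": "I"},
    ),
    RaciEntry(
        "Monitor model in production",
        {"ML engineering": "R", "Privacy": "C"},  # nobody is accountable here
    ),
]

for problem in find_raci_gaps(entries):
    print(problem)
```

Running a check like this whenever the RACI changes is one way to stop accountability gaps from appearing unnoticed as new AI systems are added.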

Remember: the foundation needs to be built to adapt.

Step 2: Establish your framework

Once the scaffolding is in place, the framework gives your AI governance its shape and substance.

There is an important distinction here that many organisations miss. Principles are values and beliefs held to be important to the organisation. Frameworks are how those principles are operationalised. One without the other is incomplete: principles without frameworks are aspirational, and frameworks without principles are mechanical. It is like a ship at sea without a lighthouse: direction exists, but there is no guidance.

You can establish your own framework or adapt existing ones. NIST AI RMF, ISO 42001, OECD AI Principles, and Australia’s AI Ethics Principles are all credible starting points. What matters is that you do not simply adopt one as it is. The framework must be tailored to your organisation, and that tailoring requires honest answers to several critical questions.

- What are your guiding principles and values? These are not marketing statements. They are the beliefs that will guide difficult decisions when AI systems produce unexpected outcomes, when business pressure conflicts with ethics, and when there is no clear right answer.

- What is your risk tolerance? This question needs to be answered at board level. AI risk looks different in a financial institution, a healthcare provider, a university, and a consumer technology company. Risk tolerance informs everything else: your policies, your controls, your appetite for automation, and your human oversight requirements.

- What regulatory obligations apply? Your jurisdiction matters enormously here. The EU AI Act, Australia’s Privacy Act, and sector-specific regulations in financial services and healthcare are not optional considerations. They are design constraints.

- What is your relationship with AI? This is where your strategy comes into play. Are you an AI developer, deployer, or user? Each carries different obligations. And what is AI’s purpose in your organisation: what business problems is it solving, which departments are using it, and how does it connect to your broader strategy?

- What is your ability to implement? Many governance frameworks fail not because they are poorly designed but because they exceed the organisation’s capacity to operationalise them. Size of organisation, leadership support, stakeholder buy-in, available expertise, and budget all constrain what is realistic. Design for where you are, with a roadmap to where you want to be.
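To make the link between risk tolerance, your relationship with AI, and human oversight concrete, here is a minimal sketch of how a tailored framework might route use cases to different levels of review. Everything in it is an assumption for illustration: the `UseCase` fields, the one-point-per-factor scoring, the tier names, and the `max_unreviewed_score` parameter (standing in for a board-set tolerance) do not come from NIST, ISO, or any other framework named above.

```python
from dataclasses import dataclass

VALID_ROLES = {"developer", "deployer", "user"}


@dataclass(frozen=True)
class UseCase:
    """Hypothetical description of one AI use case."""
    name: str
    role: str                   # the organisation's relationship with this AI system
    affects_individuals: bool   # outputs influence decisions about customers, staff, etc.
    fully_automated: bool       # no human reviews the output before it takes effect
    regulated_sector: bool      # e.g. financial services or healthcare


def oversight_tier(use_case: UseCase, max_unreviewed_score: int = 1) -> str:
    """Map a use case to an oversight tier using an illustrative additive score.

    max_unreviewed_score represents the board's risk tolerance: the highest
    score that may proceed under standard controls alone.
    """
    if use_case.role not in VALID_ROLES:
        raise ValueError(f"unknown role: {use_case.role!r}")

    # One point per risk factor; the weighting is an assumption, not a standard.
    score = sum(
        [use_case.affects_individuals, use_case.fully_automated, use_case.regulated_sector]
    )
    if use_case.role == "developer":
        score += 1  # assumption: building AI carries more obligations than using it

    if score <= max_unreviewed_score:
        return "standard controls"
    if score == max_unreviewed_score + 1:
        return "human review required"
    return "governance board approval"


# Illustrative use cases only.
summariser = UseCase("Internal meeting summariser", "user", False, False, False)
cv_screening = UseCase("CV screening assistant", "deployer", True, False, True)
loan_model = UseCase("Loan pre-screening model", "deployer", True, True, True)

for uc in (summariser, cv_screening, loan_model):
    print(f"{uc.name}: {oversight_tier(uc)}")
```

A more risk-averse board would lower `max_unreviewed_score`, pushing more use cases into review without any change to the rest of the logic. The real factors and thresholds should come out of the questions above, not from this sketch.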

Step 3: Involve stakeholders

If there is one step that separates organisations that build enduring AI governance from those that build elaborate documents, it is this one.

Responsible AI governance is like flying an aircraft: pilots, engineers, air traffic controllers, safety inspectors, and passengers all play a role. Technology alone is not enough to ensure a safe journey. AI governance is not a technology project. It requires data scientists, ML engineers, privacy professionals, legal counsel, HR leaders, identity and access specialists, and, yes, the C-suite. And critically, it requires the people who will be affected by AI decisions to have a genuine voice in shaping them.

The practical advice here is simple but frequently ignored: involve people early. Not after the framework is designed. Not after the policies are written. Early, when the design decisions are still being made and input can genuinely change the outcome.

Several things become possible when you do this well. First, you secure leadership buy-in. The most effective way to do that is not to present a governance framework. It is to use tools like the MITRE AI assessment to analyse your current maturity, identify gaps, and then demonstrate concretely how AI governance will help close those gaps and manage real business risk. Executives buy governance when they understand what they are buying it for.

Second, you surface hidden risks. The people closest to how AI is actually being used in day-to-day operations know things that the governance team does not. Their involvement is not a courtesy; it is a source of critical intelligence.

Third, you build the cultural foundation for Step 5. Organisations where people feel they were involved in building the governance are far more likely to actually follow it.

Step 4: Provide training and awareness

An AI governance framework that people do not understand is not a governance framework. It is a liability.

Training and awareness at this stage need to cover a lot of ground: your organisation’s AI governance approach, real-world use cases, the support systems available, implementation guidance, security and privacy obligations, risks, and acceptable use policies.

ISO/IEC 22989:2022 is worth noting here. It defines roughly 100 key concepts related to AI that provide a shared vocabulary across the organisation. When your executives, your lawyers, your data scientists, and your HR team are using different definitions for the same words, governance breaks down. A shared vocabulary is not a minor administrative detail; it is the foundation of coherent decision-making.

Training also needs to be differentiated. C-suite executives need strategic literacy: understanding AI risk at a governance and accountability level. Technical teams need applied ethics and safety. Every employee who interacts with AI tools in their daily work needs to understand their obligations, the boundaries of acceptable use, and what to do when something does not feel right.

The organisations that do this well treat AI literacy not as a one-time onboarding exercise but as a continuous investment, updated as the regulatory landscape evolves, as new AI tools are deployed, and as the organisation’s understanding of AI risk matures.

Step 5: Operationalise Responsible AI (RAI) culture

This is the hardest step and the one that most governance programmes never fully reach. It is also the only one that actually matters in the long run.

Operationalising a responsible AI culture means making RAI the default way your organisation thinks, decides, and acts, not a compliance layer applied on top of normal operations. It means AI governance considerations are embedded in procurement, in product development, in HR processes, in customer experience design, and in the boardroom.

Three things are essential at this stage.

- Recognise and design for cultural diversity and organisational context. What responsible AI looks like in practice will vary across business units, geographies, and functions. Governance that ignores cultural context will be resisted or circumvented. Design for the organisation you have, not the idealised organisation you wish you had.

- Define RAI as both a business imperative and an ethical one. Trustworthy AI is not just the right thing to do; it is a competitive differentiator, a risk management tool, and increasingly a licence to operate in regulated markets. When your C-suite understands this, RAI stops being a compliance cost and starts being a strategic investment.

- Work with HR to identify performance metrics and incentives for responsible AI. Culture follows incentives. If people are only rewarded for speed and scale, responsible AI will always lose to commercial pressure. Building RAI considerations into performance frameworks, promotion criteria, and leadership expectations is what makes the culture real.

Create playbooks and implementation guides for every part of the organisation that uses AI. Abstract principles do not help a marketing manager decide whether a particular use of customer data is appropriate. Concrete, practical guidance does.

The honest reality

Most organisations reading this are at Step 1 or Step 2. Some are at Step 3. Very few have genuinely operationalised a responsible AI culture. That is not a failure; it reflects how hard this work actually is and how fast the landscape is moving.

Fortune favours the prepared. The organisations that will build enduring advantage in AI are not the ones that move fastest. They are the ones that build the governance foundations that allow them to move with confidence: scaling AI responsibly, maintaining stakeholder trust, and adapting as regulation, technology, and societal expectations continue to evolve.

The roadmap is clear. The question is whether your organisation is ready to do the work. As always, ensure your foundation is correctly laid and robust.